Modern Convolutional Neural Networks

Preface

Reference books

Aston Zhang, Zachary C. Lipton, Mu Li, Alexander J. Smola. Dive into Deep Learning. 2020.

Introduction - Dive-into-DL-PyTorch (tangshusen.me)

Modern Convolutional Neural Networks

source code: NJU-ymhui/DeepLearning: Deep Learning with pytorch (github.com)

use git to clone: https://github.com/NJU-ymhui/DeepLearning.git

/modernCNN

AlexNet.py VGG.py NiN.py GoogLeNet.py tensor_normalize_self.py tensor_normalize_lib.py

AlexNet

code

import torch |

output

Conv2d output shape: torch.Size([1, 96, 54, 54]) |
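The 54×54 spatial size in the output above follows from the standard convolution output-size rule, floor((n + 2p - k) / s) + 1. Below is a minimal sketch of that arithmetic. Because the listing above is truncated, the layer settings used here (224×224 input, 11×11 kernel, stride 4, padding 1) are assumptions taken from the d2l AlexNet chapter, and `conv_output_size` is a hypothetical helper name.

```python
def conv_output_size(n, kernel_size, stride=1, padding=0):
    """Output size along one spatial axis of a convolution or pooling layer."""
    # floor((n + 2p - k) / s) + 1, valid when dilation is 1
    return (n + 2 * padding - kernel_size) // stride + 1


# Assumed first AlexNet layer (not shown in this post):
# 224x224 input, 11x11 kernel, stride 4, padding 1.
first_conv = conv_output_size(224, kernel_size=11, stride=4, padding=1)
print(first_conv)  # 54
```

The same helper applies to pooling layers, since they follow the same size rule.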

Networks Using Blocks (VGG)

VGG offers heuristics for designing deep neural networks.

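The basic building block of a classic convolutional neural network is the following sequence:

- A convolutional layer with padding to preserve resolution

- A nonlinear activation function such as ReLU

- A pooling layer such as max pooling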

A VGG block is similar: it consists of a sequence of convolutional layers followed by a max-pooling layer for spatial downsampling. See 8.2. Networks Using Blocks (VGG) — Dive into Deep Learning 1.0.3 documentation (d2l.ai).
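The block structure just described can be sketched as follows. This is a minimal illustration, not necessarily the code in the repository: it assumes 3×3 convolutions with padding 1 and 2×2 max pooling with stride 2 as in the d2l chapter, and the 224×224 single-channel input is also an assumption.

```python
import torch
from torch import nn


def vgg_block(num_convs, in_channels, out_channels):
    """Stack `num_convs` 3x3 conv + ReLU pairs, then halve height and width."""
    layers = []
    for _ in range(num_convs):
        # A 3x3 kernel with padding=1 keeps height and width unchanged
        layers.append(nn.Conv2d(in_channels, out_channels, kernel_size=3, padding=1))
        layers.append(nn.ReLU())
        in_channels = out_channels
    # 2x2 max pooling with stride 2 performs the spatial downsampling
    layers.append(nn.MaxPool2d(kernel_size=2, stride=2))
    return nn.Sequential(*layers)


block = vgg_block(num_convs=1, in_channels=1, out_channels=64)
X = torch.randn(1, 1, 224, 224)  # assumed input size
print(block(X).shape)  # torch.Size([1, 64, 112, 112])
```

Only the pooling layer changes the resolution, so stacking more convolutions inside a block deepens the network without shrinking the feature map.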

code

import torch |

output

Sequential output shape: torch.Size([1, 64, 112, 112]) |

Network in Network (NiN)

For the principles and a comparison with VGG, see 8.3. Network in Network (NiN) — Dive into Deep Learning 1.0.3 documentation (d2l.ai).

code

import torch |

output

Sequential output shape: torch.Size([1, 96, 54, 54]) |
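For orientation, here is a minimal sketch of a NiN block as described in the d2l chapter: one ordinary convolution followed by two 1×1 convolutions, each with a ReLU. The hyperparameters (11×11 kernel, stride 4, no padding, 224×224 single-channel input) are assumptions chosen to be consistent with the shape printed above. The listing above is truncated, so this may differ from the repository code.

```python
import torch
from torch import nn


def nin_block(in_channels, out_channels, kernel_size, stride, padding):
    """One spatial convolution followed by two 1x1 convolutions, each with ReLU."""
    return nn.Sequential(
        nn.Conv2d(in_channels, out_channels, kernel_size, stride, padding),
        nn.ReLU(),
        # 1x1 convolutions mix channels independently at every pixel location
        nn.Conv2d(out_channels, out_channels, kernel_size=1),
        nn.ReLU(),
        nn.Conv2d(out_channels, out_channels, kernel_size=1),
        nn.ReLU(),
    )


block = nin_block(1, 96, kernel_size=11, stride=4, padding=0)
X = torch.randn(1, 1, 224, 224)  # assumed input size
print(block(X).shape)  # torch.Size([1, 96, 54, 54])
```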

Networks with Parallel Connections (GoogLeNet)

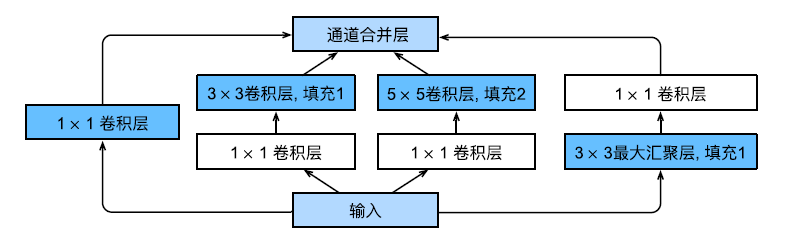

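A key insight of GoogLeNet is that combining convolution kernels of different sizes is sometimes beneficial. In GoogLeNet, the basic convolutional block is called an Inception block. An Inception block is structured as follows: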

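This Inception block consists of four parallel paths. The first three paths use convolutional layers with window sizes of 1×1, 3×3, and 5×5 to extract information at different spatial scales. The middle two paths first apply a 1×1 convolution to the input to reduce the number of channels and thus the model's complexity. The fourth path uses a 3×3 max-pooling layer followed by a 1×1 convolutional layer to change the number of channels. All four paths use appropriate padding so that the output has the same height and width as the input. Finally, the outputs of the paths are concatenated along the channel dimension to form the output of the Inception block. The hyperparameters usually tuned in an Inception block are the numbers of output channels per layer.

Now let's implement GoogLeNet: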
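The four-path structure described above can be sketched as a single module. This is a minimal illustration under assumptions: the class name, argument names, and the channel counts in the usage lines follow the d2l chapter, not necessarily the repository code.

```python
import torch
from torch import nn
from torch.nn import functional as F


class Inception(nn.Module):
    """c1..c4 are the output channel counts of the four parallel paths."""

    def __init__(self, in_channels, c1, c2, c3, c4):
        super().__init__()
        # Path 1: a single 1x1 convolution
        self.p1_1 = nn.Conv2d(in_channels, c1, kernel_size=1)
        # Path 2: 1x1 convolution (channel reduction), then 3x3 with padding 1
        self.p2_1 = nn.Conv2d(in_channels, c2[0], kernel_size=1)
        self.p2_2 = nn.Conv2d(c2[0], c2[1], kernel_size=3, padding=1)
        # Path 3: 1x1 convolution (channel reduction), then 5x5 with padding 2
        self.p3_1 = nn.Conv2d(in_channels, c3[0], kernel_size=1)
        self.p3_2 = nn.Conv2d(c3[0], c3[1], kernel_size=5, padding=2)
        # Path 4: 3x3 max pooling (stride 1, padding 1), then 1x1 convolution
        self.p4_1 = nn.MaxPool2d(kernel_size=3, stride=1, padding=1)
        self.p4_2 = nn.Conv2d(in_channels, c4, kernel_size=1)

    def forward(self, x):
        p1 = F.relu(self.p1_1(x))
        p2 = F.relu(self.p2_2(F.relu(self.p2_1(x))))
        p3 = F.relu(self.p3_2(F.relu(self.p3_1(x))))
        p4 = F.relu(self.p4_2(self.p4_1(x)))
        # All paths keep height and width, so they can be joined on the channel axis
        return torch.cat((p1, p2, p3, p4), dim=1)


block = Inception(192, 64, (96, 128), (16, 32), 32)
X = torch.randn(1, 192, 28, 28)  # assumed input shape
Y = block(X)
print(Y.shape)  # torch.Size([1, 256, 28, 28])
```

The output has 64 + 128 + 32 + 32 = 256 channels, while the 28×28 spatial size is unchanged.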

code

import torch |

output

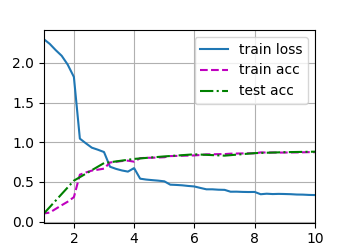

Sequential output shape: torch.Size([1, 64, 24, 24]) |

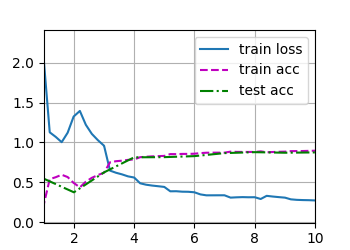

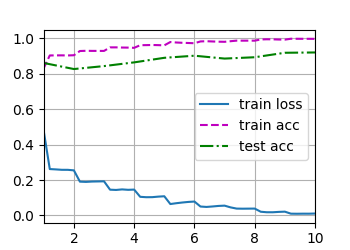

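Batch Normalization

Training deep neural networks is difficult, especially when we want them to converge in a short time. Batch normalization is an effective technique that accelerates the convergence of deep networks.

For the theory, see 8.5. Batch Normalization — Dive into Deep Learning 1.0.3 documentation (d2l.ai).

Implementing a Batch Normalization Layer from Scratch

Below we implement a batch normalization layer directly with tensor operations.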

code

import torch |

output

# TODO |
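As a rough illustration of what a from-scratch layer computes, here is a minimal sketch of training-mode batch normalization only. It deliberately omits the moving averages used at prediction time, so it is not a full layer. The function name `batch_norm_train` and the `eps` default are assumptions.

```python
import torch


def batch_norm_train(X, gamma, beta, eps=1e-5):
    """Training-mode batch norm for (N, features) or (N, C, H, W) input."""
    assert X.dim() in (2, 4)
    if X.dim() == 2:
        # Fully connected case: statistics per feature, over the batch axis
        dims = (0,)
    else:
        # Convolutional case: statistics per channel, over batch and spatial axes
        dims = (0, 2, 3)
    mean = X.mean(dim=dims, keepdim=True)
    var = ((X - mean) ** 2).mean(dim=dims, keepdim=True)  # biased variance
    X_hat = (X - mean) / torch.sqrt(var + eps)
    # Learnable scale (gamma) and shift (beta) restore representational freedom
    return gamma * X_hat + beta


X = torch.randn(8, 3, 4, 4) * 5 + 2
gamma = torch.ones(1, 3, 1, 1)
beta = torch.zeros(1, 3, 1, 1)
Y = batch_norm_train(X, gamma, beta)
print(Y.mean(dim=(0, 2, 3)))  # each channel mean is close to 0
```

With `gamma` set to ones and `beta` to zeros, each output channel has approximately zero mean and unit variance regardless of the scale of the input.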

Concise Implementation of the Batch Normalization Layer

code

from torch import nn |

output

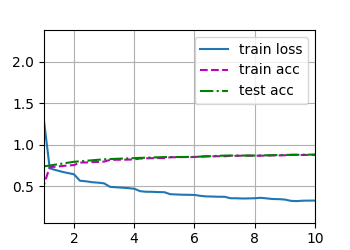

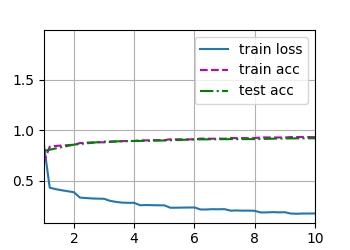

training on cpu |

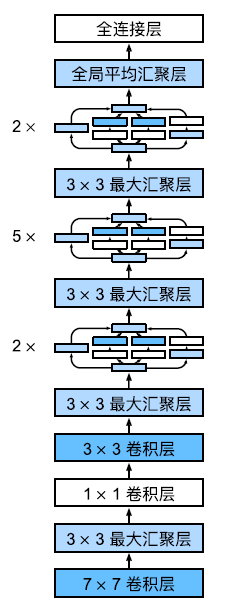

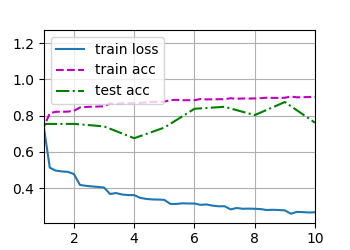

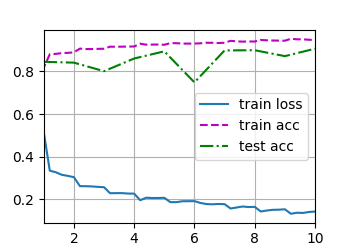
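To connect the concise version with the manual computation, the following sketch checks that `nn.BatchNorm2d` in training mode normalizes each channel with the batch mean and the biased batch variance. The tensor shape and tolerance are arbitrary choices for illustration.

```python
import torch
from torch import nn

torch.manual_seed(0)
X = torch.randn(8, 3, 4, 4) * 5 + 2

bn = nn.BatchNorm2d(num_features=3)  # weight starts at 1, bias at 0
bn.train()  # use batch statistics rather than the running averages
Y = bn(X)

# Manual per-channel normalization over the batch and spatial axes
mean = X.mean(dim=(0, 2, 3), keepdim=True)
var = X.var(dim=(0, 2, 3), unbiased=False, keepdim=True)
Y_manual = (X - mean) / torch.sqrt(var + bn.eps)

matches = torch.allclose(Y, Y_manual, atol=1e-4)
print(matches)
```

After `bn.eval()`, the layer would instead normalize with its running mean and variance, so the two results would generally no longer agree.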

Residual Networks (ResNet)

Components:

- Residual block

- ResNet model

For the principles, see 8.6. Residual Networks (ResNet) and ResNeXt — Dive into Deep Learning 1.0.3 documentation (d2l.ai) (the explanation there is fairly abstract).
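The first component, the residual block, can be sketched as follows. This is a minimal illustration assuming the layout used in the d2l chapter (two 3×3 convolutions with batch normalization, plus an optional 1×1 convolution on the shortcut). The class and argument names are assumptions, not necessarily those in the repository.

```python
import torch
from torch import nn
from torch.nn import functional as F


class Residual(nn.Module):
    def __init__(self, in_channels, out_channels, use_1x1conv=False, stride=1):
        super().__init__()
        self.conv1 = nn.Conv2d(in_channels, out_channels, kernel_size=3,
                               padding=1, stride=stride)
        self.conv2 = nn.Conv2d(out_channels, out_channels, kernel_size=3, padding=1)
        self.bn1 = nn.BatchNorm2d(out_channels)
        self.bn2 = nn.BatchNorm2d(out_channels)
        # Optional 1x1 convolution so the shortcut matches the main path's shape
        self.conv3 = (nn.Conv2d(in_channels, out_channels, kernel_size=1, stride=stride)
                      if use_1x1conv else None)

    def forward(self, x):
        y = F.relu(self.bn1(self.conv1(x)))
        y = self.bn2(self.conv2(y))
        shortcut = self.conv3(x) if self.conv3 is not None else x
        # The block learns a residual y that is added to the (possibly projected) input
        return F.relu(y + shortcut)


X = torch.randn(4, 3, 6, 6)
same = Residual(3, 3)
halved = Residual(3, 6, use_1x1conv=True, stride=2)
print(same(X).shape)    # torch.Size([4, 3, 6, 6])
print(halved(X).shape)  # torch.Size([4, 6, 3, 3])
```

The 1×1 convolution is needed whenever the channel count or stride changes, because the addition requires both operands to have the same shape.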

code

import torch |

output

# TODO |

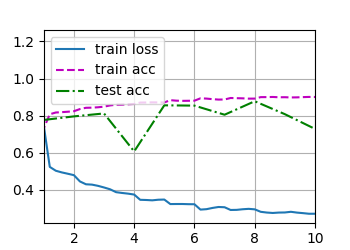

Densely Connected Networks (DenseNet)

Components:

- Dense block

- Transition layer

- DenseNet model

For the principles, see 8.7. Densely Connected Networks (DenseNet) — Dive into Deep Learning 1.0.3 documentation (d2l.ai).

code

import torch |

output

torch.Size([4, 23, 8, 8]) |
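The 23 channels in the shape above are consistent with a dense block of two convolutions with growth rate 10 applied to a 3-channel input (3 + 2 × 10 = 23). Below is a minimal sketch of the dense block and transition layer under that assumption. The names and hyperparameters follow the d2l chapter and may differ from the truncated listing above.

```python
import torch
from torch import nn


def conv_block(in_channels, out_channels):
    """BatchNorm -> ReLU -> 3x3 convolution that keeps height and width."""
    return nn.Sequential(
        nn.BatchNorm2d(in_channels), nn.ReLU(),
        nn.Conv2d(in_channels, out_channels, kernel_size=3, padding=1))


class DenseBlock(nn.Module):
    def __init__(self, num_convs, in_channels, growth_rate):
        super().__init__()
        # Each conv block sees the input plus the outputs of all earlier blocks
        self.net = nn.ModuleList([
            conv_block(in_channels + i * growth_rate, growth_rate)
            for i in range(num_convs)])

    def forward(self, x):
        for blk in self.net:
            y = blk(x)
            # Concatenate (rather than add) along the channel dimension
            x = torch.cat((x, y), dim=1)
        return x


def transition_block(in_channels, out_channels):
    """1x1 convolution to cut channels, then average pooling to halve H and W."""
    return nn.Sequential(
        nn.BatchNorm2d(in_channels), nn.ReLU(),
        nn.Conv2d(in_channels, out_channels, kernel_size=1),
        nn.AvgPool2d(kernel_size=2, stride=2))


X = torch.randn(4, 3, 8, 8)  # assumed input shape
Y = DenseBlock(2, 3, 10)(X)
print(Y.shape)  # torch.Size([4, 23, 8, 8])
Z = transition_block(23, 10)(Y)
print(Z.shape)  # torch.Size([4, 10, 4, 4])
```

Because every dense block adds channels, the transition layer is what keeps the model size under control between blocks.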

(•‿•)